The Great Local LLM Shift: Why Cloud Prompting Fails on Your Desktop

The recent surge in accessible, powerful local Large Language Models (LLMs), often run through user-friendly interfaces like LM Studio, has democratized AI development. However, a systematic mistake is emerging: users are treating these often smaller-parameter models exactly like their massive cloud counterparts, such as GPT-4. This approach is flawed because local models operate under different constraints. While a cloud model handles ambiguity and very long contexts with ease thanks to sheer computational scale, a local deployment, perhaps a quantized 7B or 13B parameter model on consumer hardware, requires precision. Treating a locally hosted LLaMA variant like a finished SaaS product neglects the tuning needed for inference speed and instruction adherence on constrained resources.

The core difference lies in the underlying infrastructure. Cloud providers leverage large, distributed GPU clusters serving very large models, which tolerate forgiving, stream-of-consciousness prompting styles. Local users, meanwhile, are often balancing performance against VRAM utilization. When you send a dense, vague prompt expecting complex multi-step reasoning, you ask more of a small quantized model than it can reliably deliver, leading to incoherent or hallucinated outputs. The lesson from recent technical analyses is clear: to maximize the performance of open-source models running on a desktop PC, the prompt structure itself must become an explicit contract between the user and the inference engine.
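To make the VRAM trade-off concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone occupy at a given precision. The function name `weight_memory_gib` is made up for illustration, and the estimate deliberately ignores the KV cache, activations, and runtime overhead, so real usage will be higher. Practical quantization formats also tend to store slightly more than their nominal bits per weight.

```python
GIB = 2 ** 30  # bytes in one gibibyte


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights only.

    Assumption: every parameter is stored at the same precision.
    KV cache, activations, and runtime buffers are NOT included.
    """
    return n_params * bits_per_weight / 8 / GIB


if __name__ == "__main__":
    for name, n_params in [("7B", 7e9), ("13B", 13e9)]:
        for bits in (16, 4):
            gib = weight_memory_gib(n_params, bits)
            print(f"{name} at {bits}-bit: about {gib:.1f} GiB for weights")
```

Under these assumptions a 7B model needs roughly 13 GiB for weights at 16-bit and about 3.3 GiB at 4-bit, which is why quantization is what brings such models within reach of consumer GPUs.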

Beyond the Chatbox: Mastering Context Formatting for On-Premise Power

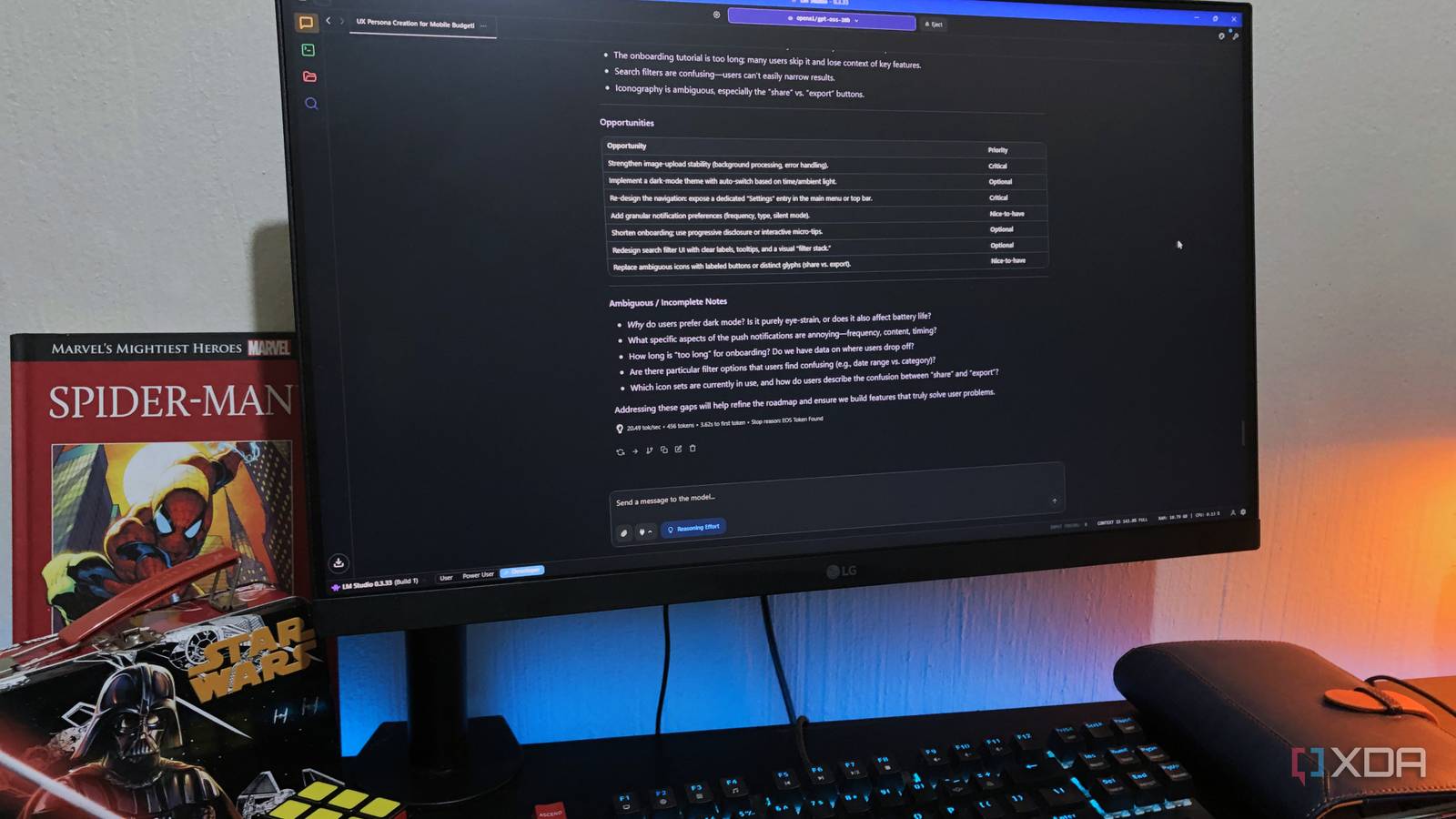

Effective local LLM utilization demands an understanding of the specific chat template or instruction format the model was fine-tuned on. Cloud-based models handle this templating behind the scenes, but when using an interface like LM Studio, users must make sure the prompt matches the model's own format. ChatML, for example, wraps each turn in <|im_start|> and <|im_end|> tokens with a role name (system, user, assistant), while Llama 2 chat models use [INST] ... [/INST] with the system prompt inside <<SYS>> ... <</SYS>>. Failure to structure these tokens correctly results in the model misunderstanding conversational boundaries or system instructions, severely degrading output quality irrespective of model strength. This isn’t merely stylistic; it’s architectural.
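As an illustration of how different two common templates are, the sketch below renders the same conversation in ChatML and in the Llama 2 chat format. The helper names `format_chatml` and `format_llama2` are hypothetical, not part of LM Studio or any library. The code assumes a single-turn exchange and leaves the beginning-of-sequence token to the tokenizer. Always check the model card for the exact format a given model expects.

```python
from typing import Dict, List

# A message is {"role": "system" | "user" | "assistant", "content": "..."}
Message = Dict[str, str]


def format_chatml(messages: List[Message]) -> str:
    """Render messages as ChatML and leave an open assistant turn at the end."""
    parts = [
        f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages
    ]
    parts.append("<|im_start|>assistant\n")  # the model continues from here
    return "".join(parts)


def format_llama2(system: str, user: str) -> str:
    """Render a single-turn Llama 2 chat prompt (BOS token added by the tokenizer)."""
    return f"[INST] <<SYS>>\n{system}\n<</SYS>>\n\n{user} [/INST]"


if __name__ == "__main__":
    system = "You are a concise coding assistant."
    user = "Explain what a chat template is."
    print(format_chatml([
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]))
    print(format_llama2(system, user))
```

Feeding the ChatML string to a Llama 2 chat model (or the reverse) is exactly the kind of mismatch that makes a capable model look broken.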

Consider the implications for advanced tasks. If a local model has been instruction-tuned for coding, supplying code without the separator tokens its instruction format expects means the input no longer resembles what the model saw during fine-tuning, and output quality suffers. This holds even for models that score competitively on benchmarks like SWE-bench. It contrasts sharply with the cloud environment, where the API abstracts away these token requirements. For developers aiming for parity with cloud response quality, mastering these formatting conventions, usually documented on the model card, is non-negotiable.

The Parameter Paradox: Why 744B Isn’t Necessary When Prompting is Perfect

The industry conversation often chases ever-larger parameter counts—the move from earlier 397B models toward the massive 744B figures reported in some experimental releases. While size undeniably correlates with capability, the current bottleneck for most technically savvy users is usability on commodity hardware. The true breakthrough for local AI isn’t just bigger models; it’s using smaller, highly optimized models effectively. A well-prompted 7B model, correctly guided by system instructions and limited context, can outperform a poorly prompted 70B model in specific domains.

This efficiency argument directly impacts real-world cost and deployment. Cloud LLMs charge per token, with rates near $0.28 per million tokens sometimes cited for specific services, whereas running a local model incurs no per-token fee: the marginal cost of inference is electricity. The scarce resources on a desktop are therefore time and hardware. Optimizing the prompt to get the desired output on the first attempt, rather than iterating five times with vague inputs, directly translates to faster development cycles and maximizes the return on the initial hardware investment. The goal shifts from brute-forcing complexity via scale to surgical precision via advanced prompting techniques.

Benchmark Realities: When Local Models Compete on Enterprise Tasks

Recent benchmarks involving intricate tasks are illuminating the local LLM landscape. While models striving for top-tier performance might showcase impressive results on metrics like ARC-AGI-2, the real test for local adoption is practical application, often measured by specialized benchmarks like SWE-bench for code generation. Cloud models generally set the standard here, but specific instruction-tuned local variants are rapidly narrowing the gap, provided the user’s environment is correctly configured.

This competitive tension necessitates a dual strategy for developers. First, rigorously test the local model’s native instruction following using its specific prompt format. Second, understand its limitations regarding context bleed. If a prompt is too long or complex for a quantized model, it might disregard early instructions to accommodate later ones. This awareness forces developers to segment complex requests into sequential, chained prompts—a practice rarely necessary when leveraging the vast context buffers typical of cloud APIs.
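The chained-prompt practice described above can be sketched as a small loop that sends one focused step at a time and carries only the previous result forward. This is a minimal sketch under stated assumptions: `run_chain` is a made-up helper, and `generate` stands in for whatever function sends a prompt to your local model and returns its text. The plain-text "Previous result" and "Task" labels are illustrative and are not a required format.

```python
from typing import Callable, List


def run_chain(
    steps: List[str],
    generate: Callable[[str], str],  # hypothetical: prompt in, model text out
    system: str = "",
) -> List[str]:
    """Run each step as its own short prompt, feeding the prior output forward."""
    outputs: List[str] = []
    previous = ""
    for step in steps:
        parts = []
        if system:
            parts.append(system)  # repeat constraints so they are never far back
        if previous:
            parts.append("Previous result:\n" + previous)
        parts.append("Task:\n" + step)
        previous = generate("\n\n".join(parts))
        outputs.append(previous)
    return outputs


if __name__ == "__main__":
    # Stand-in for a real model call, used here only to show the flow.
    def echo_model(prompt: str) -> str:
        return f"[model saw {len(prompt)} characters]"

    results = run_chain(
        ["List the functions in the module.", "Write a docstring for each one."],
        echo_model,
        system="You are a concise coding assistant.",
    )
    for result in results:
        print(result)
```

Because the system text is repeated in every step and each prompt stays short, early instructions are less likely to be crowded out by later content.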

Actionable Takeaways for the Local LLM Enthusiast

To transition from frustrating local experimentation to genuine productivity, enthusiasts must adopt a new prompting mindset. Stop treating your local LM Studio instance like a free pass to the cloud’s intelligence. Instead, view it as a high-performance, manually tunable engine requiring bespoke inputs.

- Define the System Role Explicitly: Use the model’s required system token structure to lock down the AI’s persona and constraints before the user input begins.

- Minimize Verbosity in Iteration: If an output is wrong, refine the instruction within the existing context window rather than appending long explanations, which can exhaust limited token space.

- Validate Template Adherence: Check the model card for the markers the model expects (such as Llama 2's <<SYS>> block, ChatML's <|im_start|> and <|im_end|> tokens, or specific line endings) and include them faithfully, treating them as mandatory code syntax.
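One way to act on the first and last takeaways is a small pre-flight check that runs before a raw prompt reaches the model. The sketch below assumes the model expects ChatML. The function `check_chatml` and its rules are illustrative assumptions, not an official validator, and a different template would need different checks.

```python
from typing import List


def check_chatml(prompt: str) -> List[str]:
    """Return a list of problems found in a raw ChatML prompt (empty if none)."""
    problems: List[str] = []
    opens = prompt.count("<|im_start|>")
    closes = prompt.count("<|im_end|>")

    if opens == 0:
        problems.append("no <|im_start|> markers found")
    if not prompt.lstrip().startswith("<|im_start|>system\n"):
        problems.append("prompt does not begin with a system turn")
    if opens != closes + 1:
        # Every turn should be closed except the final assistant turn.
        problems.append("turn markers are unbalanced")
    if not prompt.endswith("<|im_start|>assistant\n"):
        problems.append("prompt does not end with an open assistant turn")
    return problems


if __name__ == "__main__":
    prompt = (
        "<|im_start|>system\nYou are a concise coding assistant.<|im_end|>\n"
        "<|im_start|>user\nRefactor this function.<|im_end|>\n"
        "<|im_start|>assistant\n"
    )
    for problem in check_chatml(prompt):
        print("WARNING:", problem)
```

A check like this catches the common failure of pasting a prompt written for one template into a model trained on another.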

Note: The information in this article might not be accurate because it was generated with AI for technical news aggregation purposes.
