Tag: LMStudio

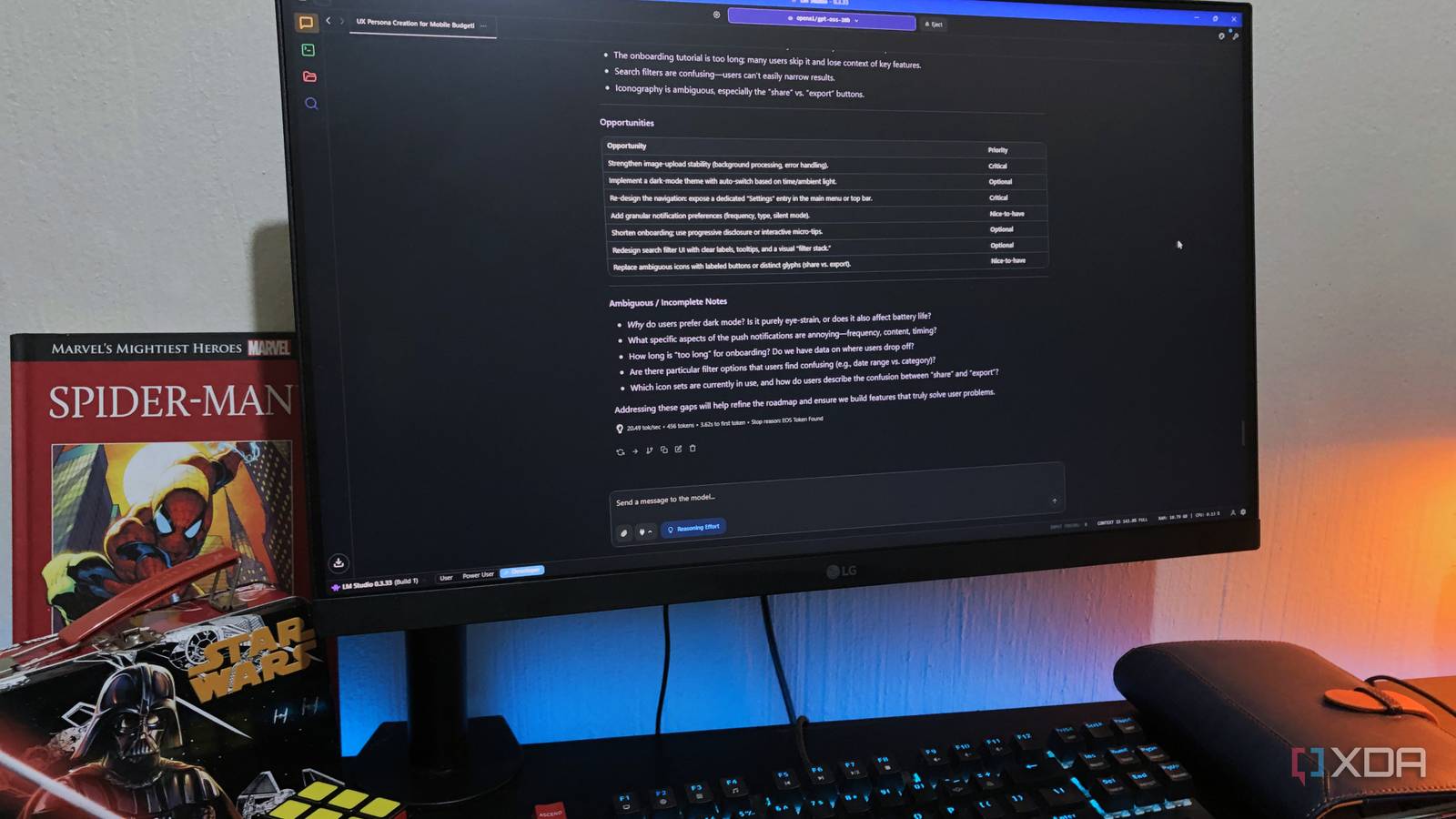

STOP Feeding Your Local LLM Like ChatGPT: The Hidden Prompting Secrets Cloud Giants Hope You Never Discover

Local LLMs are less forgiving than cloud APIs: they perform best when a prompt follows the structure the model expects, including its system and role tokens. To get the efficiency and zero-marginal-cost benefits of on-premise inference, users need to drop their generalized cloud prompting habits. Mastering chat templates is what lets models with smaller parameter counts compete effectively on tasks usually…
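To make the idea of a chat template concrete, here is a minimal sketch that renders a list of messages into a single prompt string. It assumes the ChatML layout (`<|im_start|>` / `<|im_end|>` markers), which the excerpt does not name; a given local model may expect a different template, so check its model card. The function name `render_chatml` and the sample messages are hypothetical.

```python
from typing import Dict, List


def render_chatml(messages: List[Dict[str, str]],
                  add_generation_prompt: bool = True) -> str:
    """Render chat messages as a ChatML-style prompt string.

    Assumption: the target model was trained on ChatML markers.
    Other model families use different special tokens.
    """
    parts = []
    for message in messages:
        # Each turn is wrapped as: <|im_start|>{role}\n{content}<|im_end|>\n
        parts.append(
            f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n"
        )
    if add_generation_prompt:
        # Leave an open assistant turn so the model continues from here.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


# Hypothetical example conversation.
messages = [
    {"role": "system", "content": "You are a concise assistant."},
    {"role": "user", "content": "Summarize what a chat template does."},
]

prompt = render_chatml(messages)
print(prompt)
```

Sending a bare question without these markers is the "cloud habit" the excerpt warns about: a hosted API would normally add the wrapping for you, while a raw local completion endpoint may not.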